Agenta vs OpenMark AI

Side-by-side comparison to help you choose the right AI tool.

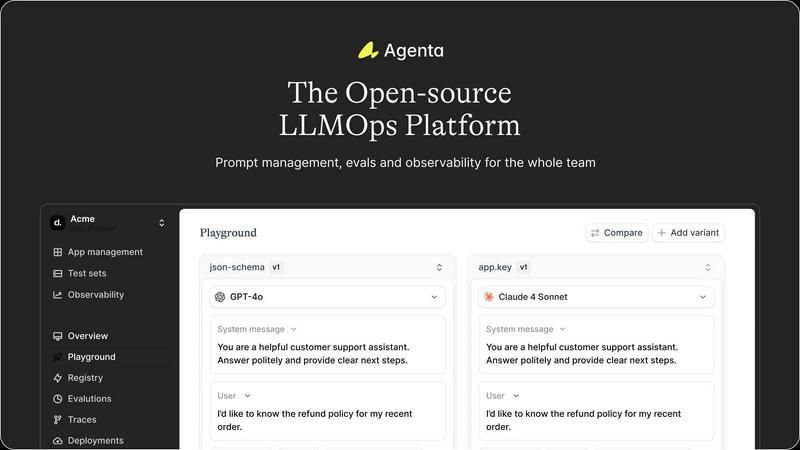

Agenta transforms LLM development with a centralized hub for prompt management, team collaboration, and real-time.

Last updated: February 26, 2026

OpenMark AI benchmarks over 100 LLMs on your specific task in minutes, comparing cost, speed, quality, and stability with no setup required.

Last updated: March 26, 2026

Feature Comparison

Agenta

Centralized Prompt Management

Agenta offers a unified platform where prompts, evaluations, and traces are stored in one place. This centralization eliminates the chaos caused by scattered documents and communication channels, enabling teams to collaborate more effectively.

Automated Evaluation System

No more guesswork in your experiments! Agenta's automated evaluation feature allows teams to create a systematic process for running experiments, tracking results, and validating every change, ensuring data-driven decisions.
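To make the idea of "validating every change" concrete, here is a minimal sketch of a regression check between two prompt variants. It is not Agenta's API: `exact_match_score`, `is_regression`, the tiny test set, and the two stand-in variants are all hypothetical names invented for illustration, and a real setup would call a model instead of a lookup table.

```python
from typing import Callable

# One test example: (input text, expected answer). Hypothetical data for illustration.
Example = tuple[str, str]


def exact_match_score(generate: Callable[[str], str], test_set: list[Example]) -> float:
    """Return the fraction of examples where the output matches the expected answer."""
    if not test_set:
        raise ValueError("test_set must not be empty")
    hits = sum(
        1
        for text, expected in test_set
        if generate(text).strip().lower() == expected.strip().lower()
    )
    return hits / len(test_set)


def is_regression(baseline: float, candidate: float, tolerance: float = 0.0) -> bool:
    """Flag the candidate if it scores lower than the baseline by more than tolerance."""
    return candidate < baseline - tolerance


test_set: list[Example] = [
    ("Capital of France?", "Paris"),
    ("Capital of Japan?", "Tokyo"),
    ("Capital of Kenya?", "Nairobi"),
]

# Stand-ins for two prompt variants; a real variant would call an LLM here.
baseline_answers = {"Capital of France?": "Paris", "Capital of Japan?": "Tokyo"}
candidate_answers = {**baseline_answers, "Capital of Kenya?": "nairobi"}

baseline_score = exact_match_score(lambda q: baseline_answers.get(q, ""), test_set)
candidate_score = exact_match_score(lambda q: candidate_answers.get(q, ""), test_set)

print(f"baseline={baseline_score:.2f} candidate={candidate_score:.2f}")
print("regression" if is_regression(baseline_score, candidate_score) else "ok to ship")
```

Exact match is the simplest possible evaluator; the same structure works if you swap in a similarity score or a human rating, as long as every change is scored against the same test set.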

Comprehensive Observability Tools

Debugging made easy! With Agenta, you can trace every request and pinpoint where your AI system fails. Annotate traces with your team, gather user feedback, and turn any trace into a test with a single click.

Collaborative Workflow Integration

Bring everyone into the fold! Agenta enables product managers, developers, and domain experts to collaborate seamlessly. With a user-friendly interface, domain experts can experiment with prompts without touching code, while PMs can run evaluations directly from the platform.

OpenMark AI

Effortless Task Description

No coding skills? No problem! Just describe your task in everyday language, and OpenMark AI takes care of the rest. This lets you focus on what matters: getting accurate results without the technical hiccups.

Real-Time Model Comparison

Why settle for outdated marketing numbers? With OpenMark AI, you get side-by-side results from actual API calls to models. This real-time comparison helps you understand performance and cost efficiency like never before.

Consistency Checks

Will your model perform like a rock star every time? OpenMark AI lets you run the same task multiple times to check for consistency. Seeing how much the outputs vary from run to run tells you whether a model is stable enough to trust, so your deployment decisions rest on more than a single lucky result.
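As a rough illustration of what a consistency check measures, the sketch below runs the same task several times and reports how often the outputs agree. It is not OpenMark AI's implementation: `consistency_report` and `fake_model_call` are hypothetical names, and the canned answers stand in for real model responses.

```python
from collections import Counter
from typing import Callable


def consistency_report(run_task: Callable[[], str], runs: int = 5) -> dict:
    """Call the same task repeatedly and summarize how often the outputs agree."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    outputs = [run_task() for _ in range(runs)]
    counts = Counter(outputs)
    most_common_output, frequency = counts.most_common(1)[0]
    return {
        "runs": runs,
        "distinct_outputs": len(counts),
        "most_common_output": most_common_output,
        "agreement_rate": frequency / runs,
    }


# Stand-in for a real model call: returns canned answers in sequence (hypothetical data).
_canned = iter(["positive", "positive", "negative", "positive", "positive"])


def fake_model_call() -> str:
    return next(_canned)


report = consistency_report(fake_model_call, runs=5)
print(report)
```

An agreement rate well below 1.0 on a task with a single correct answer is a sign that one run alone would not have told you much.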

Comprehensive Cost Analysis

Cost efficiency is key when choosing an AI model. OpenMark AI provides a detailed breakdown of costs per request, allowing you to analyze quality relative to price. This way, you can focus on value rather than just the cheapest option available.
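One simple way to look at quality relative to price is quality per dollar, with a minimum quality bar so that a cheap but weak model cannot win. The sketch below assumes that approach; `BenchmarkResult`, `rank_by_value`, the model names, and all numbers are made up for illustration and do not come from OpenMark AI.

```python
from dataclasses import dataclass


@dataclass
class BenchmarkResult:
    model: str
    quality: float            # scored quality between 0 and 1 (hypothetical scale)
    cost_per_request: float   # cost in USD (hypothetical values)


def rank_by_value(results: list[BenchmarkResult], min_quality: float = 0.0) -> list[BenchmarkResult]:
    """Drop models below the quality bar, then sort by quality per dollar, best first."""
    for r in results:
        if r.cost_per_request <= 0:
            raise ValueError(f"{r.model}: cost_per_request must be positive")
    eligible = [r for r in results if r.quality >= min_quality]
    return sorted(eligible, key=lambda r: r.quality / r.cost_per_request, reverse=True)


results = [
    BenchmarkResult("model-a", quality=0.90, cost_per_request=0.010),
    BenchmarkResult("model-b", quality=0.85, cost_per_request=0.002),
    BenchmarkResult("model-c", quality=0.60, cost_per_request=0.001),
]

ranked = rank_by_value(results, min_quality=0.8)
for r in ranked:
    print(f"{r.model}: quality={r.quality:.2f}, quality per dollar={r.quality / r.cost_per_request:.0f}")
```

Here the cheapest model is filtered out because it misses the quality bar, and the remaining models are ordered by value rather than by price alone.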

Use Cases

Agenta

Streamlined AI Development

Agenta is perfect for AI teams looking to streamline their development process. By centralizing workflows, teams can focus on innovation rather than getting bogged down by scattered resources and ineffective communication.

Enhanced Prompt Experimentation

With Agenta, teams can iterate on prompts collaboratively. The unified playground allows users to compare models and prompts side-by-side, ensuring that the best choices are made based on data rather than intuition.

Improved Debugging Processes

When things go awry, Agenta shines. Its observability tools make it easy for teams to trace issues back to their source, making debugging a straightforward and efficient process instead of a game of chance.

Efficient Collaboration Across Teams

Agenta breaks down silos between product managers, developers, and domain experts. By providing a centralized platform for collaboration, it enhances communication and speeds up the decision-making process, leading to faster product iterations.

OpenMark AI

Model Selection for New Features

Before launching a new AI feature, use OpenMark AI to identify which model best suits your task requirements. This ensures that you pick a model that aligns perfectly with your goals and user expectations.

Pre-deployment Testing

Want to validate your AI model before going live? Run benchmarks on OpenMark AI to see how different models perform under various conditions. This pre-deployment testing minimizes risks and maximizes reliability.

Cost Optimization Strategies

Are you looking to cut costs while maintaining quality? OpenMark AI helps you analyze the cost-effectiveness of different models, allowing you to choose one that delivers the best performance for your budget.

Research and Development

For teams involved in AI research, OpenMark AI serves as an invaluable tool. It allows you to experiment with multiple models, gain insights into their capabilities, and refine your approach based on real-world data.

Overview

About Agenta

Agenta is an open-source LLMOps platform that takes the chaos out of AI development and replaces it with a streamlined, collaborative experience designed for AI teams. Whether you're a developer or a subject matter expert, Agenta helps you break down the silos that often hinder project success. You can experiment with prompts and models, run automated evaluations, and debug production issues by tracing them to their source, which makes the unpredictability of large language models easier to manage. By centralizing the entire LLM development process, Agenta lets your team innovate faster, iterate with confidence, and deliver reliable applications that meet real-world demands. Say goodbye to scattered workflows, guesswork, and ineffective collaboration.

About OpenMark AI

OpenMark AI is a web application that lets developers and product teams benchmark AI models before rolling out new features. Instead of guessing, you describe your task in plain language, and OpenMark AI runs the same prompts against many models in real time. You see the details that matter: cost per request, latency, scored quality, and stability across multiple runs. This isn't just about getting a single output; it's about understanding how much performance varies. Because benchmarks run on hosted credits, you don't have to manage multiple API keys. With a large catalog of models to test, OpenMark AI helps you make informed decisions about which model fits your workflow and budget.

Frequently Asked Questions

Agenta FAQ

What makes Agenta different from other LLMOps platforms?

Agenta stands out as an open-source solution that centralizes prompt management, evaluation, and observability in a single platform, fostering collaboration and eliminating silos.

Is Agenta suitable for teams of all sizes?

Absolutely! Whether you're a small startup or a large enterprise, Agenta is designed to scale with your needs, making it an ideal choice for any team involved in AI development.

Can I integrate Agenta with my existing tech stack?

Yes, Agenta integrates with a variety of frameworks and model providers, including LangChain, LlamaIndex, and OpenAI, so you can keep working with the tools you already use.

How does Agenta support collaboration among team members?

Agenta provides a user-friendly interface that allows domain experts to experiment with prompts without coding, while product managers can run evaluations directly from the UI, fostering an inclusive and collaborative environment.

OpenMark AI FAQ

How does OpenMark AI ensure accurate benchmarking?

OpenMark AI runs real API calls to the models rather than relying on published marketing numbers. As a result, the performance data you see reflects how each model actually handled your specific task.

Can I use OpenMark AI without coding skills?

Absolutely! OpenMark AI is designed for everyone. You can describe your tasks in plain language, and the platform handles the technical details, making it accessible for non-developers too.

What types of models can I compare using OpenMark AI?

OpenMark AI offers a catalog of over 100 models that you can benchmark on tasks such as classification, translation, data extraction, and more, which makes it easier to find a model that suits your application.

Are there any costs associated with using OpenMark AI?

Yes, OpenMark AI operates on a credit-based system for hosted benchmarking. You can start with free credits, and if you need more, there are paid plans available, which are detailed in the in-app billing section.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform that changes the way AI teams build applications on large language models. It brings together prompt management, evaluation, and team collaboration in one interface, designed to eliminate the chaos that often surrounds AI development. Teams sometimes seek alternatives to Agenta for various reasons, including pricing constraints, feature sets that better align with their needs, or specific platform capabilities that Agenta may not offer. When hunting for an alternative, consider which features are essential for your team’s workflow, how user-friendly the interface is for collaboration, and whether the platform integrates with your existing tools. Look for solutions that enhance efficiency and provide robust evaluation tools.

OpenMark AI Alternatives

OpenMark AI is your go-to web application for task-level benchmarking of over 100 large language models (LLMs). It’s designed for developers and product teams who need to make smart choices about which AI model to use before launching their features. With OpenMark AI, you input your testing criteria in plain language, and it lets you compare metrics like cost, speed, quality, and consistency, all in one slick session. Users often seek alternatives to OpenMark AI for various reasons, including pricing structures, feature sets, or specific platform integrations. When scouting for an alternative, look for options that offer robust model coverage, transparent pricing, and the ability to benchmark effectively against your unique requirements. The right tool should empower you to make data-driven decisions without the hassle of juggling multiple API keys or dealing with misleading marketing claims.