Agent to Agent Testing Platform vs OpenAIToolsHub

Side-by-side comparison to help you choose the right AI tool.

Agent to Agent Testing Platform

Revolutionize AI agent testing with our platform that ensures compliance and performance across chat, voice, and phone.

Last updated: February 28, 2026

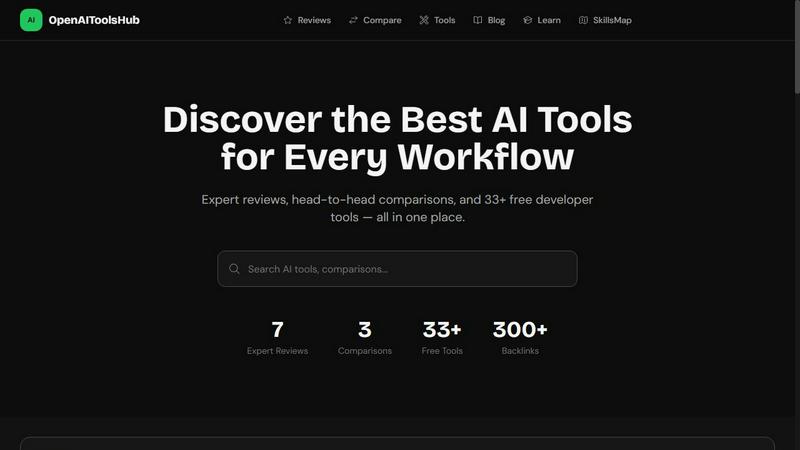

OpenAIToolsHub

Cut through the AI hype with our brutally honest, hands-on reviews and comparisons of every major tool.

Last updated: March 11, 2026

Feature Comparison

Agent to Agent Testing Platform

Automated Scenario Generation

Say goodbye to manual test case creation! Our platform automatically generates diverse test scenarios that simulate how users interact with your AI agents over chat, voice, or phone calls. This ensures that your AI is tested under a wide range of conditions, catching issues that would otherwise slip through the cracks.

True Multi-Modal Understanding

Don’t limit your testing to just text. With our platform, you can define detailed requirements or upload Product Requirement Documents (PRDs) featuring images, audio, and video inputs. This allows your AI agent to be evaluated against real-world scenarios, ensuring it responds effectively across different media formats.

Autonomous Test Scenario Generation

Leverage our extensive library of pre-defined scenarios or craft custom ones tailored for your specific needs. Test how different agents behave, from personality tone to intent recognition, ensuring your AI delivers the right response in every situation.

Regression Testing with Risk Scoring

Keep your AI agents in check with our end-to-end regression testing feature. It provides insights into risk scoring, highlighting potential areas of concern. This allows you to prioritize critical issues, making your testing efforts more efficient and effective.
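To make the idea of risk-based prioritization concrete, here is a minimal Python sketch. It is not the platform's actual scoring method, which the description above does not specify. The `RegressionResult` fields, the scenario names, and the formula (failure rate multiplied by severity) are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class RegressionResult:
    scenario: str        # hypothetical scenario name
    failure_rate: float  # share of runs that failed, 0.0 to 1.0
    severity: int        # assumed impact scale: 1 (minor) to 5 (critical)


def risk_score(result: RegressionResult) -> float:
    """Assumed formula: failure rate weighted by severity."""
    return result.failure_rate * result.severity


def prioritize(results: list[RegressionResult]) -> list[RegressionResult]:
    """Return results ordered from highest to lowest risk."""
    return sorted(results, key=risk_score, reverse=True)


# Illustrative data only; these are not real test results.
results = [
    RegressionResult("refund policy, chat", failure_rate=0.10, severity=5),
    RegressionResult("greeting tone, voice", failure_rate=0.30, severity=1),
    RegressionResult("agent handoff, phone", failure_rate=0.25, severity=4),
]

for r in prioritize(results):
    print(f"{risk_score(r):.2f}  {r.scenario}")
```

Sorting by a single score like this puts the scenario that fails often and matters most at the top of the list, which is the sense in which risk scoring helps you decide what to fix first.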

OpenAIToolsHub

Hands-On, Unbiased AI Reviews

We don't just skim feature lists—we put tools through their paces in real workflows. Every review is based on actual, gritty testing, with scores reflecting genuine performance, usability, and value. We have a strict no-paid-rankings policy, so you can trust that a 9.5 rating is earned, not bought. Our reviews are kept current, with data validated as of 2026, ensuring you're not reading outdated advice.

The AI SkillsMap for Claude Code

This isn't just a list; it's a thriving ecosystem for AI developers. The SkillsMap is your go-to directory for discovering, installing, and sharing community-built skills for Claude Code. With 349+ skills cataloged, it's the most comprehensive resource to supercharge Claude's capabilities, turning it from a generalist into a specialized powerhouse for coding, analysis, and automation.

Free Developer Tool Suite

Get instant access to 33+ essential, no-frills web tools without any sign-up or account creation. Need to convert JSON to YAML, test a regex, count tokens, or generate a hash? We've got you covered. This toolkit is built for developers, by developers, offering pure utility that just works, saving you from tab-hopping across a dozen different sites.
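For a sense of what utilities of this kind do, here is a rough Python equivalent of three of them, using only the standard library. This is a sketch, not the site's implementation: the function names are made up for illustration, and JSON reformatting stands in for JSON-to-YAML conversion, which would need a third-party YAML library.

```python
import hashlib
import json
import re


def sha256_hex(text: str) -> str:
    """Hash generator: SHA-256 digest of the UTF-8 text, as hex."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def regex_matches(pattern: str, text: str) -> list[str]:
    """Regex tester: every non-overlapping match of pattern in text."""
    return re.findall(pattern, text)


def pretty_json(raw: str) -> str:
    """JSON formatter: parse, then re-emit with sorted keys and indentation."""
    return json.dumps(json.loads(raw), indent=2, sort_keys=True)


print(sha256_hex("hello"))
print(regex_matches(r"\d+", "v2 released in 2026"))
print(pretty_json('{"b": 1, "a": 2}'))
```

Each function does one job and needs no account, network access, or setup, which is the kind of single-purpose utility the toolkit describes.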

Head-to-Head Comparison Engine

Can't decide between ChatGPT Plus and Claude Pro? Our comparison tool lets you pit the top AI tools against each other in a direct, feature-by-feature showdown. We break down pricing, strengths, weaknesses, and ideal use cases so you can see the real differences at a glance and make a confident choice without the guesswork.

Use Cases

Agent to Agent Testing Platform

Ensure AI Compliance

Use the platform to validate that your AI agents comply with industry standards and regulations. By simulating various scenarios, you can ensure that your AI behaves ethically and within the guidelines set forth by your organization.

Enhance User Experience

Leverage diverse persona testing to simulate different end-user behaviors. This enables you to evaluate how well your AI performs for a range of demographics, ensuring that it meets the needs of all potential users.

Optimize for Performance

With autonomous synthetic user testing, gather detailed analytics on key performance metrics such as effectiveness, accuracy, empathy, and professionalism. This helps you fine-tune your AI agents for optimal performance before they hit the market.

Risk Mitigation

Identify and address potential risks before they become issues. By conducting regression testing with risk scoring, you can prioritize critical areas of concern and implement fixes, reducing the likelihood of negative user experiences.

OpenAIToolsHub

Choosing the Right AI Subscription

Stuck deciding where to invest your $20 monthly AI budget? Use our detailed reviews and direct comparison engine to see how ChatGPT, Claude, Gemini, and Copilot stack up in 2026 for your specific needs—be it coding, creative writing, or research. Our real pricing data and pros/cons lists eliminate the paralysis of choice.

Supercharging Claude for Development

As a developer using Claude Code, browse the extensive SkillsMap to find and install community-built skills that add new functions, API integrations, or code-generation templates. Instantly expand your AI assistant's toolkit without building from scratch, dramatically boosting your productivity and project capabilities.

Finding a Free, Instant Dev Tool

In the middle of a coding session and need a quick UUID, want to check your markdown formatting, or encode a URL? Skip the app store and bloated online platforms. Navigate to our free tools section for a clean, fast, and ad-free utility that does one job perfectly, right in your browser.

Researching Niche AI Tools for a Project

Launching a new video campaign and need the best AI video generator? Our categorized browsing (Image, Video, Audio, Marketing, etc.) and in-depth expert reviews help you discover and evaluate specialized tools like Runway or Midjourney beyond the mainstream chatbots, providing trusted insights to justify your software spend.

Overview

About Agent to Agent Testing Platform

Welcome to the future of AI testing with the Agent to Agent Testing Platform, the first-ever AI-native quality assurance framework that’s shaking up the game. In a world where AI agents are becoming increasingly autonomous, traditional QA methods just can't cut it anymore. This platform is designed to validate how AI agents function in real-world scenarios, moving beyond basic prompt checks to assess multi-turn conversations across various mediums like chat, voice, and phone interactions. It’s perfect for enterprises that want to ensure their AI agents are ready for prime time. With a robust framework that introduces a dedicated assurance layer, this platform utilizes over 17 specialized AI agents to identify those sneaky long-tail failures and edge cases that manual testing often overlooks. Get ready to roll out your AI agents with confidence, as the platform simulates thousands of realistic interactions, ensuring rigorous validation for policy adherence, traceability, and seamless agent transitions.

About OpenAIToolsHub

Welcome to the no-BS, hype-free zone for AI tools. OpenAIToolsHub is your independent, battle-hardened review platform that cuts through the marketing fluff to deliver raw, honest insights. We're not just another directory; we're a team of developers and power users who get our hands dirty, testing the latest AI tools—from household names like ChatGPT and Claude to the niche gems you haven't heard of yet. Our mission is simple: to give you the unvarnished truth with real ratings, real pricing data, and real-world testing, so you can spend your time and money wisely. Built for developers, content creators, entrepreneurs, and anyone tired of sifting through sponsored "top 10" lists, we offer a powerhouse combo of expert reviews, head-to-head comparisons, a massive library of free developer utilities, and the web's most comprehensive directory for Claude Code skills. If you're making a decision in the chaotic AI landscape, this is your command center.

Frequently Asked Questions

Agent to Agent Testing Platform FAQ

What types of AI agents can be tested using this platform?

The Agent to Agent Testing Platform is designed to test a wide variety of AI agents, including chatbots, voice assistants, and phone caller agents, across multiple scenarios.

How does automated scenario generation work?

Our platform utilizes advanced algorithms to automatically create diverse testing scenarios. This ensures that your AI agents are evaluated under various conditions, mimicking real-world interactions.

Can I integrate this platform with my existing tools?

Absolutely! The platform seamlessly integrates with TestMu AI’s HyperExecute, allowing you to execute tests at scale with minimal setup and receive actionable feedback in minutes.

What metrics can I track during testing?

You can track an array of critical metrics, including bias, toxicity, hallucinations, effectiveness, and empathy. This comprehensive evaluation helps ensure your AI agents are performing optimally across all dimensions.

OpenAIToolsHub FAQ

How does OpenAIToolsHub stay unbiased?

We maintain a strict editorial policy: we never accept payment for reviews, rankings, or placements. Our revenue comes from affiliate links (clearly disclosed) and sponsorships that do not influence our ratings. Every tool is tested hands-on by our team, and our scores are based on a consistent, transparent evaluation framework focused on real user value.

What is the AI SkillsMap?

The AI SkillsMap is a curated, community-driven directory specifically for Claude Code users. It hosts over 349 skills (essentially plugins or custom instructions) that you can browse, install, and use to add new functionality to Claude Code. It's the easiest way to find skills for coding, data analysis, content creation, and more.

Are the free developer tools really free with no catch?

Yes, absolutely. There is no account creation, no email sign-up, and no hidden fees. The 33+ developer tools—like the Token Counter, JSON converter, and Regex Tester—are built as simple, client-side web apps. They run directly in your browser, ensuring privacy and instant access. We built them because we needed them ourselves.

How often are the reviews and tool information updated?

We are committed to providing current information. Our content is actively maintained and validated to be accurate as of 2026. We regularly re-test major tools upon significant updates (like new model releases) and update pricing and feature information to ensure our guidance remains relevant and reliable in the fast-moving AI space.

Alternatives

Agent to Agent Testing Platform Alternatives

Welcome to the cutting-edge realm of AI testing with the Agent to Agent Testing Platform, a revolutionary tool in the AI Assistants category that redefines how we evaluate AI agent performance. As organizations race to deploy autonomous AI agents, many users find themselves searching for alternatives due to factors like pricing, feature sets, and specific platform requirements. The landscape of AI testing is ever-evolving, and businesses need solutions that can scale and adapt to their unique environments. When hunting for a suitable alternative, it's crucial to consider factors such as multi-modal capabilities, the complexity of scenario generation, and the ability to assess diverse interactions. Look for platforms that offer robust assurance frameworks and can identify nuanced failures that may arise in real-world applications. With the right choice, you can ensure your AI agents are not just functional but truly exceptional.

OpenAIToolsHub Alternatives

OpenAIToolsHub is your go-to spot in the wild west of AI tool reviews. It's a no-BS platform that puts ChatGPT, Claude, Gemini, and a massive library of other AI products through the wringer with real-world testing. Think of it as the ultimate hype-checker for the AI space. So why would anyone look elsewhere? It's all about fit. Maybe you need deeper dives on a specific niche, like AI for data science, or a platform that's laser-focused on enterprise security. Budget, specific feature needs, or just a different vibe can send you hunting for a new digital sherpa. When you're scouting for an alternative, keep your eyes peeled for a few key things. Look for genuine, hands-on testing, not just regurgitated press releases. Transparency is king—you want to know who's behind the reviews. And make sure the platform covers the specific AI tools and use cases that matter to your grind.